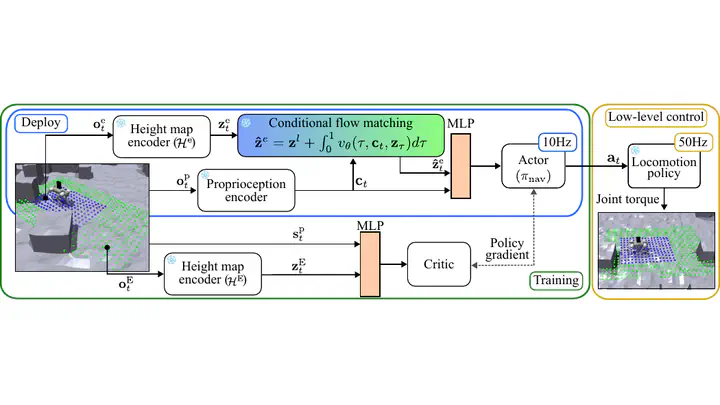

DreamFlow: Local Navigation Beyond Observation via Conditional Flow Matching in the Latent Space

Abstract

We present DreamFlow, a deep reinforcement learning framework for robot navigation in obstacle-dense environments. Conventional planners and perception systems are constrained by the robot’s immediate sensor range, leading to navigation failures in cluttered environments. DreamFlow addresses this limitation by employing conditional flow matching to extend the robot’s perceptual range through a probabilistic mapping between local height map representations and broader spatial features. This enables the robot to anticipate obstacles beyond its immediate field of view and proactively avoid potential navigation failures. We validate our approach in simulated maze and hallway scenarios as well as in real-world environments, using a Unitree Go2 quadrupedal robot equipped with dual LiDAR sensors, and demonstrate superior performance over existing methods in both latent space prediction accuracy and navigation tasks.